There's a pattern emerging that worries me, and it's not AI itself. It's what people are doing with it.

I see teams shipping real products, with real users and real money on the line, treating AI-generated code as if it were gospel. It works? Great, merge it, deploy it, move on to the next feature. And honestly, I get the temptation. It's fast, it's satisfying, and that dopamine hit is very real when you describe what you want, the model delivers, the tests pass, and the PM is happy.

But there's a difference between vibe coding a side project that no one else will ever touch and shipping a product that needs to grow and change over time, with new features every week, different people working on the same codebase, and that inevitable day when everything breaks and someone needs to understand what's going on quickly. That distinction matters, and I don't think most teams really realise it yet.

None of this is new, though.

Writing code without caring about quality, scalability, or performance has been around since long before AI coding tools existed. It's the oldest pattern in our industry: "Make it work. When? Yesterday."

Anyone who's worked on a product long enough knows what comes next. Sprints start slowing down, features take longer, and bugs get harder to track down. The code that was "quick to build" quietly becomes the code that's impossible to maintain.

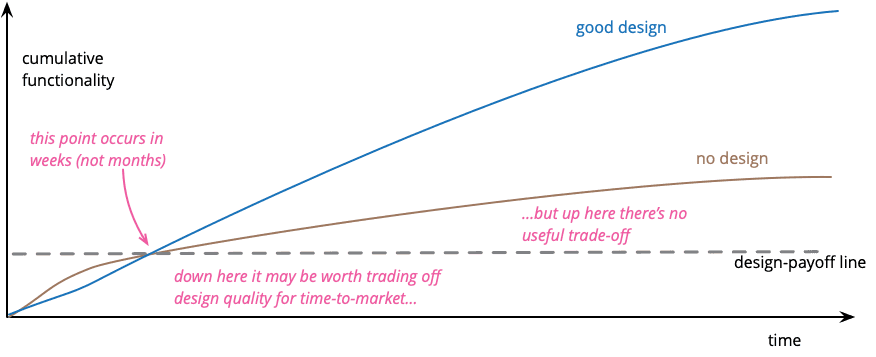

There's extensive literature on this: Martin Fowler's "Design Stamina Hypothesis", Ward Cunningham's "Technical Debt" (the original 1992 OOPSLA report is hard to find online, but Fowler's write-up is the best reference out there), and Brian Foote and Joseph Yoder's "Big Ball of Mud". Decades of people writing about the exact same thing.

What AI is doing is accelerating this cycle, for better and for worse. The implementation cycle is absurdly faster: what used to take days now takes hours, what took hours takes minutes. That's genuinely transformative. But the cycle of accumulating technical debt is also absurdly faster. If before you wrote bad code at human speed, now you generate it at inference speed, and the mess that used to take months to form can show up in weeks.

This is where I think the conversation needs more nuance than it usually gets. It's not really about AI. It's about using it without stopping to think.

How we approach it at ustwo

At ustwo, we use AI tools extensively (Claude Code included), and they've genuinely changed how we build. But we've been deliberate about how we integrate them into our workflow, because we've seen what happens when you don't.

The core idea is pretty simple: design and architecture still happen in human heads, and the AI accelerates implementation, not decision-making. When we accept generated code, we know what's in it, why it's structured that way, and what we'd change if we had more time. In practice, that means investing in context engineering. We maintain CLAUDE.md files, architectural documentation, and clear patterns so that what gets generated actually fits the product instead of just compiling and passing tests.

It matters because the alternative is what I see happening in other teams: people shipping code they don't fully understand, rationalising shortcuts with "the AI can refactor it later," and accumulating debt at a pace that would have been physically impossible two years ago.

The duplication trap

There's a line I keep hearing that concerns me: "let it duplicate code, AI refactors fast when needed."

And sure, maybe it does. But will you remember to ask? Will someone on your team, six months from now, even know there's a duplicate to refactor?

The problem with duplicated code goes beyond aesthetics, or DRY as a nice acronym to put on a slide. When the same logic lives in two places, you eventually fix a bug in one and forget the other, or change a business rule in one function while its copy keeps the old behaviour. The user ends up seeing two different behaviours for what should be the same thing. When that happens in a large codebase with multiple people working on it, finding the root cause becomes genuinely painful. AI might help you find it, but AI also helped you create it in the first place.
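To make that failure mode concrete, here's a minimal, hypothetical sketch in Python. The discount rule, the function names (`checkout_total`, `cart_preview_total`, `apply_discount`) and the thresholds are all invented for illustration; they don't come from any real codebase. Two copies of the same rule drift apart after a change lands in only one of them, and a single shared function is shown alongside for contrast.

```python
# Hypothetical business rule: "orders at or above a threshold get 10% off".
# The rule was copied into two places, then the threshold was raised from
# 100 to 150, but only one copy was updated.

def checkout_total(subtotal: float) -> float:
    # Updated copy: uses the new threshold of 150.
    if subtotal >= 150:
        return round(subtotal * 0.9, 2)
    return subtotal


def cart_preview_total(subtotal: float) -> float:
    # Stale copy: still uses the old threshold of 100.
    if subtotal >= 100:
        return round(subtotal * 0.9, 2)
    return subtotal


# The same rule defined once: changing the threshold here changes it
# for every caller at the same time.
DISCOUNT_THRESHOLD = 150
DISCOUNT_RATE = 0.10


def apply_discount(subtotal: float) -> float:
    if subtotal >= DISCOUNT_THRESHOLD:
        return round(subtotal * (1 - DISCOUNT_RATE), 2)
    return subtotal


if __name__ == "__main__":
    # A 120 cart: the preview promises a discount that checkout doesn't apply.
    print(cart_preview_total(120.0))  # 108.0
    print(checkout_total(120.0))      # 120.0
    # Routed through the shared rule, both screens would agree.
    print(apply_discount(120.0))      # 120.0
```

Routing every caller through one function doesn't make the rule correct, but it does mean a change lands everywhere at once instead of in whichever copy someone happened to remember.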

The point isn't "never duplicate code." Sometimes duplication is the right call. The point is to make it a conscious decision rather than laziness you've convinced yourself is fine because a model can clean it up later.

Who's actually responsible?

What hasn't changed, and I don't think will change, is that the responsibility belongs to whoever makes the commit. AI doesn't commit. You do. And when things go sideways at 3 AM, no one's paging the model.

There's nothing wrong with moving faster. The problem starts when speed becomes an excuse to stop thinking. That's technical debt with compound interest, and, as any tired adult knows, compound interest is very efficient at turning a small problem into an enormous one.

Software is always evolving. Code written only to solve today's problems has always had a short shelf life, no matter how powerful the tools become. The tools themselves will keep changing, but the principles that guide good software design tend to endure.

I use AI every day and I'm not interested in going back. But every time I accept a suggestion, I try to make sure I could explain that code to someone else on my team if they asked me about it tomorrow. It's not a high bar; it's the same one we've always had.